Illegal software allowed to be used for just cause?


The police were able to find proof that the computer once contained child pornography through the use of a keylogger. A keylogger is an illegal spyware application that saves the keyboard actions of the user. A keylogger is installed without the knowledge of the user by means of a virus or a software package containing spyware. A keylogger is malware and for that reason against the law.

Just for the record, I am 100% against any form of illegal pornography and am very pleased Catterson is charged. Yet I am not completely sure the actions taken by the police are proper. If a keylogger is illegal, then it is not right for the authorities to make use of it, even if the cause is just. I assume the malware was installed before the police confiscated Catterson’s computer; still, I reckon the use of it is questionable and perhaps even illegitimate. What if in the future someone gets arrested on milder charges, say possession of Spiderman 3 in DivX format: are the police allowed to use spyware to gather evidence?

I reckon the British police did not put the keylogger records forward as evidence, but used them to force the suspect to confess. Still, this may lead to further breaches of privacy: following this logic, governmental authorities may distribute malware and use it, as long as it is not put forward as proof but used as a means to pressure suspects.

Luckily I saw Spiderman 3 in the cinema… actually let me rephrase that…. sadly I saw Spiderman 3 in the cinema… I wasted 10 euros on a terrible movie.

This blog is a real magnet for pingback spam lately. While I’d like to take it as a sign of our growing popularity, that would be like being flattered by calls from telemarketers. Also, it probably says more about the arms race between spammers and spam filters: the trend for a while now is for spammers to use RSS feeds to syndicate. Now excerpts from our blog posts end up on spamblogs, where spammers include Google Ads and wait for the money to roll (or trickle) in. It’s all automated, and ends up looking like this:

But here’s the interesting point. Two years ago, Google implemented the nofollow html attribute to prevent this very same comment spam. Nofollow is the default setting for comments on blogging platforms, meaning links placed in blog comments (including pingbacks) do not ‘count’ in search engine rankings. It is overwhelmingly obvious that as a prevention mechanism, it simply doesn’t work – spamblogs and comment spam are just too easy and cheap. What nofollow does do, though, is help keep Google’s search engine rankings stable. If Google is serious about preventing comment spam, wouldn’t it make more sense to prevent these guys and girls from getting accounts on Google Ads?
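To make the mechanism concrete, here is a minimal sketch of what "nofollow by default" could look like on the platform side. It is only an illustration under stated assumptions: the function name `add_nofollow` is hypothetical, and the simple regex approach is not how any particular blogging platform actually does it (a real implementation would use a proper HTML parser).

```python
import re

# Deliberately simple patterns for illustration; not a full HTML parser.
_ANCHOR = re.compile(r"<a\b([^>]*)>", re.IGNORECASE)  # an opening <a ...> tag
_REL = re.compile(r"\brel\s*=", re.IGNORECASE)        # an existing rel attribute


def add_nofollow(comment_html: str) -> str:
    """Add rel="nofollow" to every link that does not already set rel.

    Hypothetical helper: it mimics what a blogging platform might do to
    links in comments and pingbacks before publishing them.
    """
    def mark(match: re.Match) -> str:
        attrs = match.group(1)
        if _REL.search(attrs):
            return match.group(0)  # leave links with an existing rel untouched
        return f'<a{attrs} rel="nofollow">'

    return _ANCHOR.sub(mark, comment_html)


if __name__ == "__main__":
    pingback = '<a href="http://example.com">Nice post</a>'
    print(add_nofollow(pingback))
```

Note what the sketch does and does not do: the link is still published and still clickable, it just stops counting towards rankings. That is why the attribute protects the search index more than it protects the blog.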

Although I’m not really sure what this is all about, on page 3 of the Google search results for the Spinplant I got the following: In response to a legal request submitted to Google, we have removed 1 result(s) from this page. If you wish, you may read more about the request at ChillingEffects.org. On the Chilling Effects page it is described as a ‘Dutch defamation complaint to Google’. See the screenshot below for what I got. So basically, while some are keeping the Spinplant alive, there is also a movement trying to keep it completely out of the Google search.

The link: Google Spinplant Search Result Page no. 3

Dérive is a notion used by Guy Debord in an attempt to convince readers to revisit the way they look at urban spaces. The concept means to walk aimlessly, or drift, through the city streets, guided by the momentum and the space itself. A modern practice of Dérive is roaming the streets of your city through the satellite photographs in Google Maps and, more recently, Google Street View: a new feature of Google Maps that allows one to view and navigate high-resolution, 360-degree street-level images of various cities (in the US). Google’s maps distinguish themselves from traditional printed maps in the sense that the user is able to interact. Besides zooming in on a location, the user is able to request additional information about a particular spot. This information is offered by parties collaborating with Google, as well as drawn from databases which Google controls. Google Maps became vastly popular when it integrated satellite photographs (and photographs taken from airplanes) in its online maps; besides a conventionally designed map containing information on demand, the map now presents a realistic bird’s-eye view, allowing the user to rediscover familiar places (such as his or her own house) from an unfamiliar perspective.

The basic premise in Debord’s theory of Dérive is that people are trapped in the practices of everyday life; by looking at the city while following their emotions, they can break with their daily route, routine and enclosed space. Cities are in fact designed in ways that direct and control their publics. Cities are complex structures in which movement and mobility are managed by the plan: for instance, road signs tell one where to go at what speed and where not to go between what times, when to stop and when to continue. But the architecture also controls the flow of people, by means of the way in which certain areas, streets, or buildings resonate with states of mind, inclinations, and desires. Debord argues that people should explore their environment without preconceptions, in order to create a better understanding of their own nature; as one becomes aware of one’s location, one can value and comprehend one’s existence. The idea is that people build on their insights and seek out reasons for movement other than those for which an environment was designed. Bringing an inverted angle to the world can make people assign new meanings to familiar places, produce new forms of social interaction and make public space a place where one stops to look.

This idea of (re)discovering familiar places can be compared to taking a boat tour through one’s own city. The roads beside the eight canals in the center of Amsterdam are passageways I personally travel through frequently; however, when passing through them by boat, the well-known monumental facades along the canals seemed foreign to me from a different angle. Similarly, the satellite photographs in Google Maps change the meaning and memories attached to common places; they give the user an experience of re-familiarity. Street View, on the other hand, draws on the recognizable element; the photographs are taken from street level, and thereby rediscovering is substituted by virtual sightseeing. The user can now wander through New York while staying at home; moreover, the user can zoom and alter the view at any time. Instead of looking up the fastest route or determining one’s location, the function seems to have shifted in the direction of roaming and aimless wandering.

In addition, modern maps are coupled to databases consisting of location-bound information, possibly delivering the user knowledge and ultimately awareness. A wide variety of peer-created extensions are freely available, augmenting the information and increasing the amount of knowledge, such as the Wikipedia extension, which provides a sense of temporal accuracy in Google Earth because information is provided about the history and coming into being of a particular place, complete with specific dates, adding to the hyper-real situation. The practice of contributing to the medium contrasts with traditional one-way media institutions. Google Earth allows users to act upon their creative skills and knowledge by offering possibilities to co-create the product and make it available to anyone, also outside the community. Google Maps API is a tool which users of Google Earth can use to add any information they like to existing maps offered by Google. In addition, Google offers users SketchUp. Similar to Google Maps API, SketchUp is a free application with which users can add content to maps presented by Google; with SketchUp, however, the user can do this in 3D (for example a model of one’s own house). Via Google 3D Warehouse the models can be uploaded and made available to all users of Google Earth. Currently maps are circulating in 3D, or with data tips containing personal information or photographs taken by users from street level (which consequently changes the perspective of the original design). Information visualization tools such as maps enable greater understanding of reality, our society, life, and in short our existence. The accessibility and popularity of dynamic digital maps should make academics and interaction designers wonder how new ways of wandering can educate, emancipate, and enlighten the masses.

Introduction by Ole Bouman:

At the NAi, we defend the values of architecture that we are fond of defending. Most architects and policy makers believe that architecture is about shelter and enclosure, occupation and representation. Archiving architecture used to be at the core of the NAi, but it has to look at what is happening with architecture now and look beyond the traditional field. New questions arise about how new technologies affect architecture and what this says about the individual. It is not solely about designing and archiving our world anymore, but also about looking at new possibilities. Discussions with other disciplines are very valuable here.

What happens in the merging of physical and digital space? Is locative media a successor of net.art? It is about creating experience in real locations with digital layers. Nowadays GPS phones and ad hoc networks create a new experience of place. This experience of place is no longer only the domain of architecture. Interaction designers, game designers, media makers and artists are now moving into that space. Through architecture, we can define ourselves as human beings. At least, we used to. New technologies are defying these standards, this paradigm. There is fluidity in how we create representation. New, locative technologies empower a nomadic life and new ways to organize our spaces. Are they also affecting the way we look at ourselves? We have to think about our position vis-à-vis our technologies and societies. Roughly, two kinds of audiences can be distinguished (within the conference): one of curiosity and openness versus one that is procrastinating, hesitant towards technology. This dichotomy is a typical western position. Where modernity may no longer be on our side and technology is at the core of our daily life, we need people to help us conceptualize and define new concepts of urbanity and social interaction.

Lecture

The book ‘Sentient cities: ambient intelligence and the politics of urban space’ by Stephen Graham is a reflection on politics, locative media and ubiquitous computing. Where technology fuses itself into the background of daily life, all sorts of scenes (art, commercial, governmental, etc.) are utilizing new technologies and seeking combinations, weaving them into certain directions simultaneously. We are moving towards a society of enacted environments. Phenomena like an internet of things, sensor databases, biometric sensing, ubiquitous computing, machines linked to senses and databases, etc. are already dawning. All these infrastructures are constantly at work (or will be working) in the background in cities, arranging all kinds of privileges, possibilities and accesses. We don’t know where these servers are located, how the data is stored and who keeps watch over them. All this data decides what we can and cannot do in a city, where we have access, where we can move. All is profoundly political. All levels of this infrastructure are politicized.

Computing is becoming ubiquitous; urban spaces are being brought into being that have a computable layer. A critical question posed is: what exactly is new about this? We need to be aware of our history and the continuities and changes in societies and technologies in order to see the real and important developments.

Three starting points are addressed by Graham:

1. We must completely abandon the notion that there is a real and a virtual world, as if the two were opposed. Instead, we must look at how new media is layering over existing spaces, thus reorganizing them. Graham builds on Bolter and Grusin’s notion of remediation: the virtual is constituted on top of our real world. Remediation is taking place constantly. Remediation of painting, film and television, of cities, houses and streets. The old notion of holographic pods, parallel worlds, cyberspace, does not exist. We are far from it.

2. Cities can be seen to emerge as fluid machines. We have to look at cities as processes: intense connections, constantly mixing, with distant proximity and proximate distance in all sorts of ways. All sorts of flows are present in a city (data, people, services; all is about movement). These flows of energy, water, people, information, goods, etc. are all linked and are constantly influencing each other. Seeing cities as processes, we have to think about how new media fits into the process.

3. We must take a look at when and how technology becomes a part of our infrastructure. Everyone is using technology without thinking about it (like electricity). The most profound technologies are those that disappear into daily life (Mark Weiser). Now politics become important, but less visible.

Socially, these technologies become ‘black boxes’; they become ‘engineers’ stuff’. So, what is infrastructure precisely? It is embedded, sunk into daily life and transparent. It links times and spaces. We have to learn how to use it. It has to be based on standards. Technologies of infrastructure only become visible when they fail. Graham wants to tell three stories about ubiquitous computing and locative media:

1. consumeration

2. militarization/ securitisation

3. urban activism and democratization

1. consumeration

Is there an ideal friction-free capitalism? Within the control revolution, the commercial world wants to take the internet and fix it down to local geography in order to achieve a data-driven mass customization. Exploitation of this possibility will occur very soon, based on a database model (like the Amazon recommendation model). Imagine a real-time monitoring of consumers, where all your favorites and bookmarks in physical life are tracing you and actively drawing your attention constantly. Layering new media onto the city creates a lot of commercial opportunities. Marketplaces are emerging from mobility, where everyone has a perfectly tailored capitalism.

An example is RFID and logistics. If this is to work, users have to adopt it (Windows, for instance, has an AURA application, with barcode readers on phones): an augmented consumption. The aim is to impose markets where markets were not possible before, treating things more as a commodity than as a public good. One can, for instance, start to commercialize roads (as an example of capitalizing mobility). This is done by controlling access and building premium options to bypass nasty things (like congestion). Even ads alter dynamically along the way. In summary, some basics of the life of the city will be exploited via locative media.

Another example mentioned is the city center of London and the access to it. Lots of infrastructure is needed to make sure access is denied and offenders are fined: mass customization in reverse. It demonstrates the difference in possibilities and politics; they are not defined by the technology, but rather by the politics of dealing with that technology. Even internet traffic is prioritized, rather than everyone (and all data) being equal. An example of this is an imposed new ‘smart’ internet envisioned by Cisco, where only prioritized data will be able to travel from computer to computer. Some networks and/or routes will be unavailable to the masses. Another example is call centers: companies realize that congestion is the problem, so when you are deemed profitable, you are granted faster access. This is a new politics of technology.

What happens when architecture, new media and RFID meet? Lots of politics and privatized spaces. Location-based services are the first to show these politics. Consumer databases are being used to create ins and outs, haves and have-nots. The geography of cities is now managed by geo-demographics. Information about social networks, crime rates, local governments, recommended neighborhoods and so on is already in use. We will see major social databases influencing your choices (when you are literate in this info-world). This underpins the politics of data.

2. militarisation/securitisation

Much in this point revolves around the war on terror, where the city is deemed the problem, with supposed enemies. How can we use our technology? The security world is panicky in addressing risks in western cities. This is a world of targeting, about locating and targeting enemies. It involves huge recognition and data mining technologies and biometrics: CCTV and face recognition, etc., even identifying walking styles. It is always about creating an average, in order to pick out the abnormalities. We move towards code-space and software-sorted mobilities. We are already moving into biometric systems. All of this with the argument of limiting terror. There is lots of commercial gain here.

About CCTV: lots of cameras are privately installed. Security companies and the military are investigating how they can be linked together and how they can be computerized. The politics of this are enormous. Think about the anonymity on the streets that would be lost.

Also, the Oyster card is mentioned as an example, along with its misuse, typifying the link between commercial and surveillance use. Once the system is in place, it can be used for other purposes than intended. How can you make regulation robust enough to prevent misuse?

As an example, Graham mentions the American army admitting they need a new “Manhattan project” in order to allow tracking and locating targets in asymmetric urban warfare. This is the point where everything becomes war-space. The American army uses non-arguments to make the city a warfare territory, and for that they need locative media. Lots of these developments are moving into civilian space. One example is the DARPA ‘Combat Zones That See’ project, where concepts of smart cams etc. are introduced. It is a techno-utopian fantasy, but one that is becoming more real every day.

Jordan Crandall talks about tracking and tracing technologies that are trying to capture and colonize the future. A war on statistical persons is emerging. Locative media is constantly looking for the now and thus is constantly ahead of itself. The militarized view collapses identification and turns it into databases.

3. urban activism and democratization

This point is about reanimating and re-politicizing the city. Dealing with the problem of alienation, can we actually bring urban politics back to rigid social and political questions and interaction? Re-appropriating technology is the key. Technologies often start out as military and are then commercially exploited. After that, their real or alternative use must be sought. New social performances strive for re-enchantment, a more interactive model of participatory democracy. Graham now quotes from Shivanee: “locative media and the viscosity of space”.

Examples mentioned are tagging the city, like clickable environments, graffiti and physical hyperlinks. Lots of digital collective memory and narratives are emerging in physical space. Others reveal bodily mobilities, e.g. the Urban Tapestries example. The Yellow Arrow project is also mentioned: guerrilla mapping, re-visioning the streets. The main point is that it is all about visualizing the politics of planning in new ways. It is a form of relational architecture where digital interaction can mix with local events. Urban screens are mentioned as a link between the internet and real-life city urbanism and space. All these projects attempt to render all the network activity visible. This shows a politics of data and geographies of data. We need to reverse engineer data to understand what is happening and to adjust the politics of this data.

Conclusions

Multiple visions of all sorts are struggling with these new technologies of locative media. There may be some different dynamics, but all are efforts of remediation. Graham argues there is a relationship between these multiple pathways; overlaps are to be found. Is there a healthy co-existence of, for instance, the artistic and the commercial view? And how will this be shaped? It is about an emerging urban and tech politics. The world of temporality is very important in the process of delegating agency to software. What political and social assumptions go into our software? Making these things possible is very important.

Hi all,

I started working on our t-shirts, here are my first two suggestions:

T-shirt 01: Tetris style (t-shirt_mom01.pdf)

T-shirt 02: Floppy style (t-shirt_mom02.pdf)

Comments? Suggestions? (Black and white & vector-based)

PS: Too bad we don’t have our own URL yet, we could print it on the back.

I want to post a discussion question here about the use of the classic philosophers (i.e. Aristotle, Plato, etc.) and the link with New Media studies. And also a call for links on this subject, if anyone knows any.

This question came to me when I was reading ‘The Six Elements and the Causal Relations Among Them’ by Brenda Laurel. She talks about the links between Aristotle’s Poetics and Human-Computer interaction. She does give us some striking examples, like the link between characters in a play doing things “out of the blue” and a word processor with an automatic correction doing things “out of the blue” (see your T9 mobile textbook perhaps).

I’ve also blogged about Plato’s Republic and the history of the internet. And the links are quite remarkable when you read the original text by Plato. I thought I was reading the history of the internet itself, written by Plato himself. But the question is, are we seeing things they never really meant while writing? Is that even relevant? Or are we seeing patterns that dominate life through the ages? Or…?

I’m getting rather tired of people ranting on about the inferiority of text-based conversation such as MSN, ICQ, AIM, Yahoo! messenger, G-talk and IRC. The prevailing opinion seems to be that face to face communication is hands down superior to online text conversations, because face to face includes body language, intonation, facial expressions, physical contact and so on. The story goes that consequently there is a huge information loss during online communication because of the aforementioned features lacking in text-based conversation. Efforts to bridge this gap, like the usage of emoticons in text-based conversation, merely constitute a poor substitute.

Another notion going hand in hand with this view is the belief that it is ‘better’ for a person to spend time outside of the house, meeting people face to face at bars, clubs, fraternities, sports teams and so on. I think this trend has been going on ever since television addiction became a social issue, of people ‘wasting their time’ on their own as opposed to being socially active. It is the reigning (conservative) way of viewing human contact, to the point that you can’t help but feel a little guilty or ashamed when, asked where you were last Saturday night, you have to answer “behind the computer”.

You should really check out Photosynth, a new piece of software which creates 3D models using regular 2D photos of a certain object, for instance San Marco square in Venice. No I’m not biased! ;-) This thing really rocks…

No longer just a metaphor for how we consumers fall for anything, the Whatever Button is now available as a Firefox add-on (Great big thanks to Erik, who coded it).

More about the button below.

Partly in response to that stumper from a while back, ‘What’s a blog?’, this is another: What is interactivity?

I’ll start with the top result from a “define: interactivity” google query:

If your Web site is not interactive, it’s dead.

I really like this definition. It gives a sense of urgency – as in, “I want my website to be alive!” – without really saying anything. With its knowing inadequacy, the definition serves as a reminder that I don’t just want an interactive website, but also an interactive phone, an interactive television and of course – with every reference book I buy – a ‘free interactive CD-ROM’. The list goes on: I prefer interactivity when it comes to education, to politics, and to my social life. And more than anything else in this world, I really want an interactive pet robot.

Introduction

Yesterday the workshop, this morning the start of the two-day “Video Vortex – responses to YouTube”, an international conference organized by the Institute of Network Cultures at PostCS11, Amsterdam. A good crowd fills the hall on the 11th floor of the former Dutch postal service building, all waiting for the first session to kick off. When everyone has found a seat, Geert Lovink opens the session and the two-day conference has started.

[all photos by Anne Helmond]

This session covers, as the program booklet states, the YouTube era we’re living in now, where video content is produced bottom-up with emphasis on participation, sharing and community networking. But with companies like Flickr being consumed by Yahoo, and YouTube by Google, the question arises what the future is for the production and distribution of independent online video content. How can a participatory culture achieve a certain degree of autonomy and diversity outside mass media? What is the artistic potential of video databases and online filmmaking?

Tom Sherman

The first to speak is Tom Sherman. Sherman is an artist and writer and professor in the Department of Transmedia at Syracuse University in central New York. He has represented Canada at the Venice Biennale, performs and records with the group Nerve Theory, and received the Bell Canada Award for excellence in video art in 2003. His most recent book is Before and after the I-Bomb: an Artist in the Information Environment (Banff Centre Press, 2002).

Vernacular Video

In his presentation, Sherman gives a nice overview of the forty years of “video evolution”, in which video art has lived many lives. Born out of television, video decentralized this medium by allowing consumers to electronically capture, record, process, store, and reconstruct a sequence of still images. The evolution of video has been fuelled not only by this capturing and storing, but also by the development of display techniques. The future will bring us thinner displays (as thick as paper), which makes the ubiquity of displays possible (video watches, videophones such as the iPhone, etc.). Distribution and exhibition of video are transformed into file sharing and transmission.

Is video a tool or an art medium? According to Sherman it is both. Video can be an art medium, just as it is used for video conferencing, video dating, video surveillance, video gaming etc. So video art is a way in which the “tool” video is being used.

In the late 1960s, video was a process, not a product. You’d capture the moment, analyze it in the initial playback, and then re-record over it. Videotape is not television (VT = not TV), but now, with the overwhelming amount of online video that is available, and people watching TV episodes online next to “home made” videos, television is losing its higher ground. As with many forms of art, video art as an art based on aesthetics is now in a period when “life waltzes over it”, like a tsunami, depriving it (perhaps) of its aesthetics, its rules.

As Sherman talks about the history of video art, he shows that video art has had repeated near-death experiences: at times in its 40-year existence it has been difficult to commodify, and it was only kept alive by the following ongoing life support systems:

– Educational institutions

– Festivals

– Museums and galleries

– Television network cable and the web

– Video publishing

– Collectors

– Governments and foundations

– Nightclubs music video and video culture hybrids

Video Art vs Vernacular Video: art becomes conservative

Video art started developing its aesthetics (an internal logic, a set of rules for making it) as a response to television. It was then avant-garde. But with the rise of vernacular video (people’s video from their points of view), video art seems to attach itself firmly to traditional visual art, media and cinematic history in attempts to distinguish itself from the broader media culture. Video art becomes “rear garde”; it becomes conservative.

The characteristics of vernacular video (according to Sherman):

– Displayed recordings will continue to be shorter and shorter like tv and advertising

– Use of canned music will prevail

– Video diaries will become important, voice over will replace writing

– More road films, travelogues

– More extreme films

Where video art “is” aesthetic, Sherman sees vernacular video as anesthetic: we don’t follow rules when recording. As said, video art was a response to television; now that the Internet is replacing TV, video art will be a response to the web.

Read Sherman’s article Vernacular Video.

The second speaker is Rosemary Comella who has been working since 2000 as a researcher, project director, interface designer and programmer at the Labyrinth Project. As part of Labyrinth, she developed the interface for Tracing the Decay of Fiction, a collaborative project between experimental filmmaker Pat O’Neill and the Labyrinth team, and she helped direct The Danube Exodus: The Rippling Current of the River, an interactive installation with filmmaker Peter Forgács. She also developed Bleeding Through: Layers of Los Angeles, an interactive installation and DVD-ROM, in collaboration with cultural historian Norman Klein and the Center for Art and Media (ZKM) in Germany. She directed and served as photographer for Cultivating Pasadena: From Roses to Redevelopment, an installation and DVD-ROM, including catalog, exhibited at the Pasadena Museum of California Art in 2005. Comella is currently creative director for ‘Jews in the Golden State: A Home-Grown History of Immigration and Identity’, a public on-line archive and museum installation that hopes to illuminate one hundred and fifty years of Jewish history in California through a visually engaging project that invites users to supplement official history with their own histories and memories using text, home movies, photographs and ephemera.

Home-Grown History

In her presentation Comella put emphasis on the relation between narrativity and the database. As content on YouTube seems rather chaotic, a role for artists is to create order out of this chaos by picking and choosing, editing and shaping an antithesis of YouTube with disciplined spaces that are coherent. Many of Comella’s projects have been participatory, but in a more disciplined way, with participation of both specialists and non-specialists.

Comella shows some of her work, of which I will mention two here. The first is a project she made in collaboration with Pat O’Neill, known for his participatory-style work, called “Tracing the Decay of Fiction”. The interface of the interactive DVD lets you navigate through the rooms of the Ambassador Hotel in Los Angeles. It contains the now locked ballroom where Sirhan Bishara Sirhan assassinated Bobby Kennedy. J. Edgar Hoover, Marilyn Monroe, Howard Hughes, Jean Harlow, John Barrymore and Gloria Swanson once lived there, and with this DVD you can see footage that was shot over the last 30 years (numerous movies and scenes have been shot there). For generations of moviegoers and television consumers, these names and events have seeped into our consciousness, have become our memories.

Another project is called Home-Grown History. It is a software tool that is being developed at the University of Southern California’s School of Cinematic Arts, in collaboration with a number of partners. The Home-Grown History software concept stems from earlier projects that combine personal and public archives to visually and aurally represent cultural histories of a particular place over time. In this latest incarnation, the software will be applied to the project Jews in the Golden State: A Home-Grown History of Immigration and Identity, which will work both as an on-line archive and as a traveling museum installation. The tool and concept can easily be applied to other communities. The concept is to facilitate the creation of a profound social space and structure for encouraging a productive dialogue between personal stories and public histories, in a way that will be useful and pleasurable to both the academic historian and the general public.

This session ended with a presentation by Florian Schneider, a filmmaker who has been involved in a wide range of projects that deal with the implications of postmodern border regimes on both a theoretical and practical level, over the past ten years. He is one of the initiators of the campaign Kein Mensch ist Illegal at documenta X in 1997 and subsequent projects such as the Noborder Network and the online platform kein.org. He developed and co-organized several events, including Makeworld (2001) and Borderline Academy (2005). Currently Schneider is working on Imaginary Property, a series of texts, films and video installations researching the question: “What does it mean to own an image?” He has lectured at museums, galleries, art academies and conferences worldwide. Since 2006 he has taught art theory at the art academy KIT in Trondheim and he is a member of the PhD program “research architecture” at Goldsmiths College, London.

Imaginary Property

Schneider gave a very good, yet very long presentation, which I will try to summarize here. After the talk, Schneider promised me he would post the transcript of his lecture on nettime sometime next week, so make sure to read it (and check my summary for mistakes ;)

The summary: Digitization of our ‘lives’ has irrevocably changed our image of ownership and property. Self and ownership have a whole new arithmetic. Whereas the old, bourgeois conception of property was characterized by anonymity and objectivity (the relation between you and the object you may or may not own), today’s immaterial production, digital reproduction, and networked distribution create the need for property relations to be made visible in order to be enforced (the relationship between you and property has now become one between you and other users who can play with these images). We need to believe that property is still around in order for capitalism to work.

Property exists first of all as imagery (logos, branding, images as someone else’s property) and rapidly becomes a matter of imagination. Read the other way around, “imaginary property” could also be understood as the expression of a certain form of possession or ownership of imaginaries. It opens up the question: “What does it mean to own an image?”

Schneider sees a problem in the massive expropriation of images that is presented to us as Web 2.0: as soon as you upload, you sign an agreement that gives the rights to a corporation. This massive expropriation is a response to p2p networks (they basically do the same). But what is at stake here? Ownership. The new imaginary ownership: replace ‘intellectual property’ with ‘imaginary property’, and see what happens then…

Finally, after a 24 hour delay due to an annual trade fair in Guangzhou I am on my way to Shanghai. While listening to the snoring of my opposite bunk bed neighbor, smelling the noodles of the next door restaurant compartment and watching the rice fields blended with factories pass by, I will summarize some of my experiences and findings so far.

My research started in Hong Kong, where I stayed for 4 days. Back home I had arranged a meeting with some legal experts who have been advising prominent foreign IT companies operating in China. During an extensive dim sum lunch they told me a lot about the current possibilities, or rather restrictions, that Chinese companies with international ambitions face. It is difficult, especially for the smaller companies, to expand overseas because it is rather hard to get money out of the country unless you are in a joint venture or listed in a different country. One of the legal experts made an interesting remark:

“The Chinese government is only capable of making restrictions, it is simply too busy to encourage companies to go international”

On the third day of my stay in Hong Kong, after meeting several experts and people who could possibly help me with some relevant guanxi (connections), I realized that I needed to have some business cards made. Naturally this is very easy to arrange in Hong Kong. In a back alley somewhere in Central my cards were finished within a day. The next morning it was time to head off to Shenzhen, where I could proudly present myself as an official ‘New Media Researcher’.

In Shenzhen I had set up appointments with Tencent’s Richard Chang, Technology Strategist U.S. Office, Tristan Han, Sr. Product Manager International Product Center, and Thijs Terlouw, a Dutch developer working at Tencent’s innovation center. Tencent is one of China’s biggest Internet service portals. One of Tencent’s most popular products, QQ, an instant messaging platform, is used by tens of millions of Chinese Internet users. Furthermore, Tencent offers community applications, search services and game-oriented products.

After a one-hour drive from the center of Shenzhen, where I was staying, I arrived at the Shenzhen High-Tech Industrial Park (SHIP), where Tencent’s head office is located. After Thijs showed me around his department and blew me away with some of the new applications he is currently working on, it was time to start my interview with Richard and Tristan.

Tristan started by providing me with a brief overview of all of Tencent’s international activities so far. This was all very interesting, but the reason for my visit was to find out more about Tencent’s future international developments! When I enquired about this, Tristan was surprisingly open and told me, among other things, that Tencent is planning to expand its activities in Vietnam, India, Thailand, HK, Macau, South Africa, Japan, Indonesia, the U.S., and in the near future also Eastern Europe. To deal with cultural differences that affect certain applications, Tencent uses a distinct strategy for every single country it is planning to enter.

Because of the length of this post, but also because I don’t want to disclose too much information from my thesis results, I will sum up a few of the more general findings I made during my visit.

– There seems to be a gap between the number of people who play games in Asia and in Western countries.

– This gap is resonating through into the culture of applications: Asian applications are heavily influenced by gaming culture, such as collecting icons, dressing avatars, or earning activity points. In the West this is only starting to catch on.

– In general online entertainment is more advanced in Asian countries when compared to the West. In China this is probably due to the relatively low age of Internet users. Western Internet use tends to be more focussed on obtaining information.

– Since the US and European IM market is already mature, Tencent will use a strategy that is primarily focussed on cooperation with local companies and mainly focussed on gaming.

– Tencent is unlikely to go international with its mobile services, which are very popular in China, because the Chinese technology differs too much from that of other countries; “it is a unique technology” developed by the government (China Mobile).

– Richard Chang has launched internal innovation contests; employees can send in all their ideas and win money. Thijs told me that in general the management is very open to innovative ideas and that creativity is encouraged.

These are only a few outcomes of the very interesting interview and tour. The interview ended with Tristan showing me a roadmap of all of Tencent’s international initiatives. Unfortunately I was not allowed to take a picture of it!

Before saying goodbye Tristan showed off one final application, QQ Pet, and while it was loading he told me:

“I haven’t started QQ Pet because in our meeting it will die!”

Talking about increasing the engagement level…… Feeding a QQ Pet will cost special QQ coins that can be obtained through special QQ cards. You can buy these cards almost everywhere including post offices, kiosks, software stores, Internet cafes, supermarkets, convenience stores, and so on. Read more about it here.

In general I was truly blown away by the level of innovation, the emphasis on employee-created innovation, and also the determination of Tencent’s employees. The company culture and atmosphere also came across as relaxed, with stuffed QQ animals and ping pong tables everywhere. After the tour and interview I had lunch with Thijs and his girlfriend (who also works for Tencent). We talked about the company’s culture and how Thijs likes working in Shenzhen for a much lower wage than most developers in the Netherlands.

At 14:00 I had set up a meeting with a government official in charge of international relations of SHIP. After the lunch I headed off to the Virtual University that was located just 15 minutes down the road. I will not discuss this meeting too extensively. All I can say is that it felt as if I was an important international investor; a huge boardroom was prepared with luxurious sofa-sized chairs and plenty of drinks and snacks.

After being loaded down with a bag filled with 2 kilos of background information (unfortunately most of it in Chinese), and the usual exchange of business cards (two hands!), I was able to briefly interview Li Xiaodong, SHIP’s international spokesperson, only an hour before a big Korean delegation was expected in an even bigger boardroom next door.

We mainly talked about the international future of the park, encouraging innovation domestically and some other relevant topics. When the Koreans started pouring in it was time for me to leave. I had to catch a train to Guangzhou, where I had set up a dinner meeting with an American entrepreneur who advises foreign companies on SEO (for Baidu) and Internet marketing in China.

Until now my research trip has been very satisfying and I have already gained a deep insight into the situation. I am looking forward to visiting more Chinese web companies in Shanghai and Beijing to find out how they are taking on the future!

I would like to conclude this post with a typical remark that Li made:

“in technology we have to follow for now, but we will dominate”

Unfortunately, for some very mysterious reason I am not able to access my thesis blog in China. But not to worry: I have been invited by Gang Lu to write about my experiences on his MobinoDE blog. Expect some posts there soon!

<update> Please read more about my Tencent visit at MobinoDE! – Pieter-Paul (added: 22/04/08) </update>

http://mastersofmedia.carbonmade.com/

Introduction

Carbonmade is a Web application which allows you to create and host an online portfolio. Creating a portfolio can be a lot of work and take up all your time. Carbonmade offers a service which allows you to quickly set up a portfolio without any knowledge of creating webpages. This sounds somewhat like a paradox, since a portfolio is supposed to be a creative expression of your work and templates are usually restricting.

Thom Meens, “ombudsman” (some kind of public relations person any… Any suggestions for translation?) for the Volkskrant is not satisfied with the current structure of the Volkskrant (a newspaper) blog service. A member of a pro-pedophilia political party had a blog on Volkskrant blogs for months and, understandably, they weren’t very happy when they found out. To what extent can Volkskrant be held accountable for publications of the bloggers on their service? Thom Meens wants to have some sort of blog control mechanism. Is this in conflict with an important aspect of public blogging, namely the freedom of the blogger to post what he or she wants? Can a newspaper blog service ever be a place for free, independent expression?

Read the whole article here (in Dutch)

Tasks:

- Check the codes in the posts if the layout looks messy.

- Check the comments through Manage > Awaiting moderation (delete spam: “mark all as spam” > “moderate comments”).

- Update the blogroll.

- Write a post on a new media related subject that you find interesting.

- Moderate our joint Gmail account: mastersofmedia.

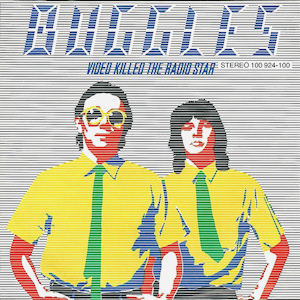

As I was getting ready to turn off the computer, I decided to do some last-minute browsing through YouTube. Wondering if there would be any drastic changes after its takeover by Google, I came across an intriguing title: internet killed the video star. A video(?) by a band that consists of members who have never met in real life, yet jam together via the internet. They are called the Clipbandits. The three band members live in three different states and time zones in the USA.

Masters of Media presents another audio book review:

Indonesian Transitions. Editor: Henk Schulte Nordholt. June 2006

The book review focuses on an essay by Bart Barendregt: Mobile modernities in contemporary Indonesia; Stories from the other side of the digital divide.

Please tune in:

Second Life is a virtual world that exists on the servers of Linden Lab; nothing is real except the value of real money. Players can open an account and chat with other avatars. The avatars start out naked, after which you can shop for clothes at, for instance, Nike. The money that is exchanged has the same value in the “real” world. Academics are currently analyzing the influence of the interaction between a virtual economy and the real economy. Stock analysts are examining investment values of virtual property, the IRS is looking for legal ways to tax players/concerns, and criminals are studying new ways to commit virtual crime/theft.

Second Life has been in the Dutch news continuously this month. It all started with Duran Duran four weeks ago. This long-forgotten band from the eighties figured it could make a comeback, a second career, and a virtual success by playing their music for half a million Second Life clients. The obvious reason is that the dinosaur-aged band members can hide their wrinkled bald heads behind a virtual face lift: the 3D avatars of SL.

On Thursday November 16th Julian Kücklich will present his lecture ‘Beyond Narratology or Taking Computer Games Seriously’. Julian Kücklich is co-editor of the latest issue of Fibreculture on ‘Gaming Networks.’

The lecture starts at 4 p.m. In order to allow us to attend this lecture, the session of the New Media Theories class will end at 3:30 p.m.

Check out this eleven minute video of a conversation in a waiting room which is entirely relevant in the context of new media (studies). It’s well worth your time!

I recently worked at a friend’s house and I was trying to connect to their wireless network called “GORGONZOLA.” I thought that was a pretty funny name until I noticed the names some neighbors had given their networks. One was called PAKISTAN and another was called Jesus Christus is Heer (Jesus Christ is Lord), which made me laugh out loud (OK, actually it made me LOL). I had previously imagined a network-name flame war in which one neighbor would name their network something provocative and another neighbor would respond with an even more provocative name. I wonder if this happened in this neighborhood (notice: a pretty well protected neighborhood).

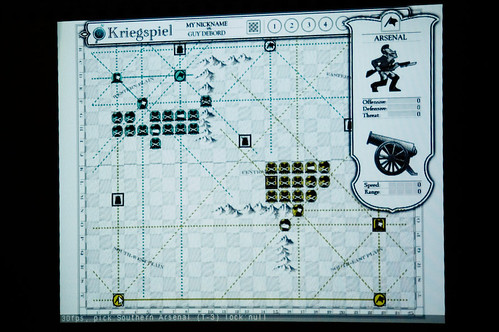

On Thursday night Alexander Galloway, NYU assistant professor and founding member of the Radical Software Group, gave us a peek at his latest project, an online version of the Game of War.

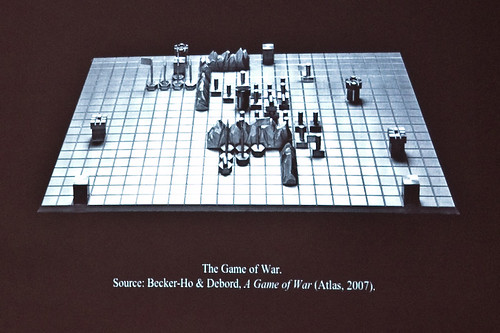

This is a ‘remake’ of a board game created by French Situationist Guy Debord in 1978, a somewhat forgotten departure by the filmmaker and writer so closely associated with the Paris riots of 1968.

Antagonism and algorithms

Before showing the work in progress, Galloway spoke a bit about his interest in games research, especially as it relates to the ways games model antagonism. He asks whether these models can help us think about social and political forms of conflict and struggle. Traditional games assume symmetrical forms of conflict, with two opposing sides of equal ability, but is it possible to model different forms?

Here, Galloway is thinking specifically about the distributed networks he theorized at length in his book Protocol. Such networks recall the rhizome and the radical politics of French Post-structuralism, but also describe the material organization of the Internet. If there are games that simulate the swarm-like behavior of the distributed network, do these provide any clues as to how progressive this organizational form is, or can be?

Modeling the network economy: Starcraft and World of Warcraft

Real time strategy games can be distinguished by a couple of traits: they are continuous and not turn-based, they are often played with a bird’s eye view of the action and they tend to be about resource-gathering in some form or another. For Galloway, the best example is Starcraft (image), which he discussed alongside World of Warcraft. Both of these games clearly display some of the swarm characteristics he mentions, but the key is how and why they do so. Galloway argues that simulation of the distributed network goes hand in hand with that of a different kind of economy.

As Fleur noted, cooperation on World of Warcraft depends on an engineered scarcity. It makes certain types of collaboration possible and necessary. And one can draw comparisons between, for instance, the strategies the games facilitate and the project-based work of ‘Tiger Teams’ and other post-industrial forms of labor. What the games suggest is that such distributed behavior is not an abstract, ideal form superimposed on reality, but something that emerges from specific material and economic conditions.

Galloway points out that the relationship between contemporary gaming and the network economy goes deeper than this level of ‘play’. It is no coincidence that the machines we work on also house our games: informatic labor and informatic play are continuous, meaning each can seamlessly transform into the other. And while this echoes theories about the dissolving boundaries of ‘private’ and ‘public’, Galloway’s argument stresses the materiality of this shift.

What is the effect of having such a strong connection between a medium (the Internet and gaming) and a means of production (post-Fordism)? Isn’t the multi-tasking, team-working World of Warcraft player ‘training’ to be a better ‘knowledge worker’? Maybe so, Galloway says, but this would be very different to training in a disciplinary sense. Rather, these games are about liberation and desire. They promote autonomy, even if this must be achieved paradoxically through cooperation. So instead of thinking of games as making us better workers, Galloway argues we should look at how they make us better bosses.

Guy Debord and the Game of War

In the last part of the presentation Galloway talked about Debord’s Game of War, its history and about the project to remake the game in online form (what Galloway calls “a massively two-player online game”).

Here is the original version of the game, which Debord brought out in limited edition, fine silver:

Game of War is strangely traditional: it resembles chess in that the board is square and there are two evenly matched opponents, each with the same set of class-based pieces. However, there are also some twists. On the one hand, the board has an uneven topology, with mountain ranges and immobile defensive forts, and on the other there is a strong emphasis on keeping pieces within lines of communication with command centers, or ‘arsenals’ (the communication lines are visible in the online version). In short, not all squares are created equal, and the degree to which a particular area of the board is strategic changes throughout the game.

Alexander Galloway’s Java version of the Game of War, which is not quite finished yet:

Debord wrote that he first came up with the idea for the game in the 1950s, and that it “embodied the dialectic of all conflict”. He was fascinated by it, and saw it as an abstraction and perfection of war. Some now go so far as to say it was his most autobiographical work, though it has received considerably less attention than his films and writings. But Galloway says this is changing, and Debord’s game is especially interesting from the perspective of New Media and Game studies.

Game of War leaves behind some questions. Why would a filmmaker like Debord turn to the politics of the algorithm? Given his involvement in the radical politics of the 1960s, what sense did it make to privilege the strategic and logistical aspects of such a game, when he could have developed something more along the lines of the rhizome? In an age of asymmetric warfare, why the fascination with something so symmetrical? Perhaps the Game of War has a surprising move or two waiting to be discovered.

(Photographs courtesy of Anne, see also her summary of Galloway’s presentation)

I managed to update an English wiki page on the subject of my BA thesis. It’s been annoying me for years that racial stereotypes are widely studied in all media except for video games. Considering they’re very influential and played by millions of kids, there should be a moral standard.

http://en.wikipedia.org/w/index.php?title=Ethnic_stereotypes_in_popular_culture#Video_Games

Some video games, like the Grand Theft Auto series, also use ethnic stereotypes. A 2001 study[2] by Children Now shows that most protagonists (86 per cent) were white males, and that non-white characters were portrayed in stereotypical ways: seven out of ten Asian characters as fighters, eight out of ten African-Americans as sports competitors, and nearly nine out of ten African-American females as victims of violence (twice the rate of white females). Finally, 79 per cent of African-American males were shown as verbally and physically aggressive, compared to 57 per cent of white males. Other games, like Command & Conquer: Generals, stereotype Arabs, who are portrayed as vile, brutal and backward, in contrast to the morally and technologically superior western military.

Besides that you might notice a slight change at http://nl.wikipedia.org/wiki/Media_en_cultuur