Googley Eyes

Microsoft is doing it (HoloLens), Apple is doing it (Apple AR), Facebook is doing it (Facebook AR Studio) and, last but not least, Google is doing it. Augmented reality is the future of computing and all the big companies want to be the leader in this new market (The Guardian). In this blog I will focus on Google Lens, the AR service of Google, which is in my opinion the most influential company in this field.

So why Google? Google owns some of the most widely used software products. Google Chrome is the biggest web browser (Statcounter), Google Search is the biggest search engine (Statcounter), Android is the biggest mobile operating system (Statcounter) and Google Drive has the biggest market share in file sharing technologies (Datanyze). Add Google Maps, Google Translate, YouTube and many more services, and it becomes difficult to believe any other company can match the amount of user data Google gathers. Therefore, I think it is important to talk about Google’s view on augmented reality, with Google Lens as my main focus.

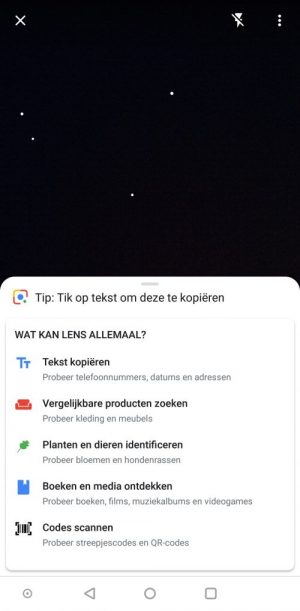

Google Lens is software that allows users to interact with their environment using other core Google services. Since the last update, Lens is able to understand text, find similar products, identify plants and animals, recognize books and media, and scan barcodes (picture 1). Altogether, this means Google Lens is able to see and understand a great deal of your surroundings or, to make it even catchier, Google gave itself and its users a pair of Googley eyes. This raises two questions: why does Google want to be in your camera, and has Google reached the state of ‘omniveiller’, as predicted by Blackman?

Picture 1

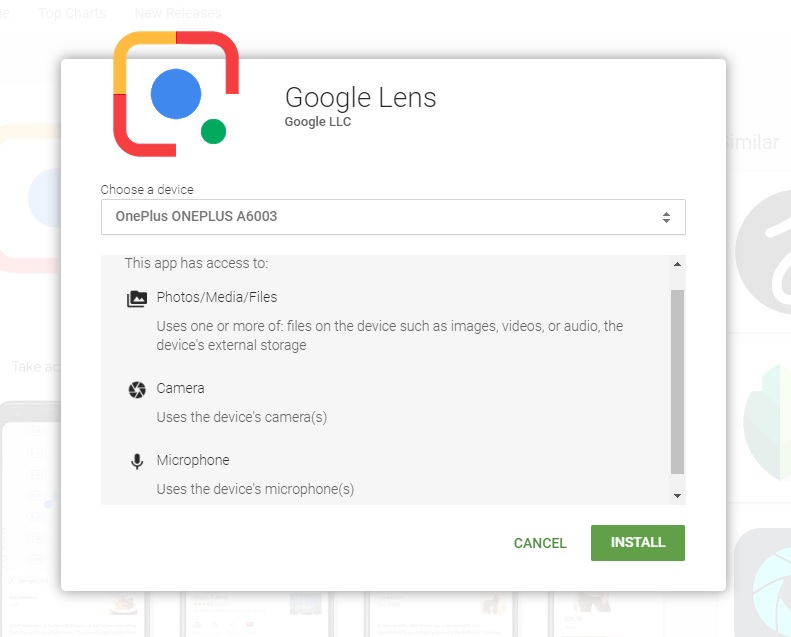

Picture 2

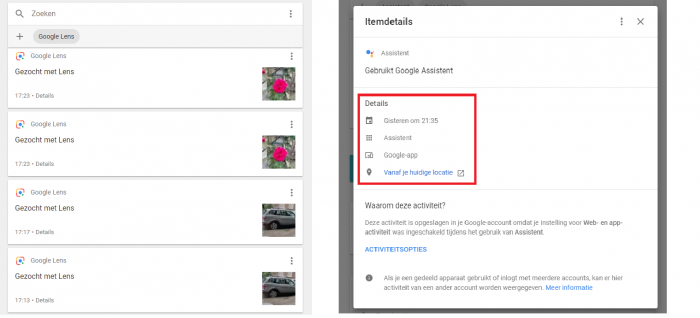

To answer these questions, we have to know what data Google collects through Google Lens. A quick look at the permissions shown when downloading Google Lens from the Play Store reveals that the app has access to the user’s photos, media, files, camera and microphone (picture 2). It is not specified whether Google saves the data acquired through Google Lens. However, going through ‘My Activity’ on the ‘My Google Account’ page shows that Google stores all the pictures taken with Google Lens and combines them with the location and the information Google Assistant gives about the picture (picture 3). What a shock. This, of course, is nothing new for a company driven mostly by ad revenue (Alphabet Second Quarter 2018 Results). Since ads are shown when using Lens, just as when using Google Search, Lens appears to be a new way for Google to show ads. Instead of asking for a query, Google can now ‘see’ the object the user wants information about and show an ad related to the object in the picture.

Picture 3

When asked what business targets Google wants to achieve with AR, Aaron Luber, who leads business development on Google’s Augmented Reality/Virtual Reality team, said something that seems to prove the above-mentioned theory:

“The reason we are doing this is, because it is the future of […] immersive computing. […] It is the way people are interacting with their devices going forward. They are talking to their devices using the Google Assistant, they are seeing things through their camera using something like Google Lens […]. When you think of the things that are core to Google, like Search and Maps, […] these are core things that we are monetizing today, that we see as extra added ways, that we can use augmented Reality to shepherd the way people are going to use their computing devices in an immersive way. All the ways that we monetize today will be ways that we think about monetizing with AR into the future.” (Aaron Luber AR in Action)

(15:01 is where the quote begins)

So, by combining AR with its core services (Search, Maps, etc.), Google is able to introduce its users to new ways of interacting with those services, and to earn revenue from them, because these new forms of interaction open up a whole new kind of advertising. This is a great example of what Trottier and Lyon call liquid surveillance (Trottier and Lyon, 92-93).

This leads to the second question I asked at the start of this blog: Is Google becoming an omniveiller? Long story short, yes. But not quite yet.

Blackman describes omniveillance as: “omnipresent, omniscient, digital surveillance in public, broadcasted indiscriminately throughout the internet, without any concern for newsworthiness.” (Blackman, 327) Back in 2009, Blackman predicted that Google could become the very first omniveiller, based on an analysis of Google Street View. He saw that Google was able to document huge amounts of data by sending out cars that took 360-degree pictures of the world (Blackman, 328-331). Google Lens does exactly the same thing on a much larger scale: users take the pictures themselves and ‘give’ them to Google, with metadata such as location attached, without Google having to do anything.

Another reason why Google appears to be an omniveiller is the lack of an editorial board (Blackman, 334). Blackman states that, for a company to become an omniveiller, there should be no editorial board that deletes data for whatever reason. Since all Google Lens users send their information and photos to Google automatically, no one filters or deletes content before it reaches Google.

Blackman sees the semantic web as a part of omniveillance (Blackman, 335). The semantic web is a term coined by Mr. Internet himself, Tim Berners-Lee. It describes a web where everything is connected and where software agents are able to find answers and solve problems (Berners-Lee, 36-37). Berners-Lee also states that the semantic web will eventually break out of the virtual world and into the real world. Google and all its services seem to come close to what Berners-Lee describes as the semantic web. Google Lens is the first step into the real world, where objects can be recognized by the web and used to solve problems or answer questions.

So, why is Google Lens not the last puzzle piece for Google to become an omniveiller? The semantic web is here, there is no editorial board and there is more data than ever before. Only one thing is missing, and according to Blackman it is the essence of omniveillance: “instantaneous worldwide distribution, indefinite retention, and ease of access”. All the content provided by users of Google Lens goes directly to Google, but that’s where it stops. The content is not available worldwide, only on Google’s servers, which makes Google powerful, but not an omniveiller. Ain’t that a shame.

References

“Aaron Luber l ARIA”. YouTube. 28.03.2018. 23.09.2018. < https://www.youtube.com/watch?v=NfMrJEa9bvI >.

“Alphabet Announces Second Quarter 2018 Results”. 2018. Alphabet. 23.09.2018. < https://abc.xyz/investor/pdf/2018Q2_alphabet_earnings_release.pdf >.

Apple augmented reality. Apple. 23.09.2018. < https://www.apple.com/ios/augmented-reality/ >.

Berners-Lee, Tim, James Hendler, and Ora Lassila. “The Semantic Web.” Scientific American 284.5 (2001): 34-43.

Blackman, Josh. “Omniveillance, Google, Privacy in Public, and the Right to Your Digital Identity: A Tort for Recording and Disseminating an Individual’s Image over the Internet.” Santa Clara L. Rev. 49 (2009): 313-392.

Datanyze. 2018. 23.09.2018. < https://www.datanyze.com/market-share/file-sharing/google-drive-market-share >.

Facebook AR Studio. Facebook. 23.09.2018. < https://developers.facebook.com/products/ar-studio >.

Gibbs, Samuel. “Augmented Reality: Apple and Google’s next battleground.” The Guardian. 2017. 23.09.2018. < https://www.theguardian.com/technology/2017/aug/30/ar-augmented-reality-apple-google-smartphone-ikea-pokemon-go >.

Lyon, David, and Daniel Trottier. “Key features of social media surveillance.” Internet and Surveillance. Routledge, 2013. 109-125.

Microsoft Hololens. Microsoft. 23.09.2018. < https://www.microsoft.com/nl-nl/hololens >.

Statcounter. 2018. 23.09.2018. < http://gs.statcounter.com/browser-market-share >.

Statcounter. 2018. 23.09.2018. < http://gs.statcounter.com/os-market-share/desktop/worldwide >.

Statcounter. 2018. 23.09.2018. < http://gs.statcounter.com/search-engine-market-share >.