Framing AlphaZero: An infrastructural approach to A.I.

Man vs. Machine

In May 1997, world chess champion Garry Kasparov lost a now infamous match to IBM's supercomputer Deep Blue. Deep Blue was the first computer program to win a match against a reigning world chess champion. It anticipated Kasparov's moves by searching through possible sequences of moves, evaluating up to 200 million positions per second, which gave it the capacity to look 12 moves ahead (IBM). Kasparov was baffled and afterwards accused IBM of cheating. Twenty years later, DeepMind, an artificial intelligence company owned by Google's parent company Alphabet Inc., announced its newest machine learning program, AlphaZero. AlphaZero learns by playing matches against itself, starting from zero human knowledge (hence the "Zero" in its name), using a technique known as reinforcement learning.

The chess match between Kasparov and Deep Blue

Over the past twenty years, machine learning has gained ground as the amount of data available for machine learning grew exponentially, together with advances in computer programming and hardware (Emspak, McKinsey and Company). Since Kasparov, we have seen IBM's Watson defeat us on the game show Jeopardy!, DeepMind's AlphaGo beat 18-time world champion Lee Sedol at Go, and eventually that same AlphaGo being beaten by DeepMind's newer program AlphaGo Zero. Time and time again, we are confronted with the sheer indignation and disbelief in the eyes of those who battled the machines and lost. AlphaZero is DeepMind's latest invention in machine learning software. Unlike most of its predecessors, it doesn't need human data in order to learn: it trains itself. AlphaGo Zero follows the same principle, but AlphaZero doesn't just play Go; it also taught itself chess and shogi, a Japanese zero-sum game similar to chess (Mols). Within a day of training it had surpassed the top players in all three games, both human and machine. Should we be worried?

Artificial Intelligence

Artificial intelligence is hot. Tech companies advertise their A.I.-enhanced services, and technology media cover the topic en masse. However, as history has shown again and again, these booms of interest in a new technology often come paired with deterministic visions of the future that leave little room for nuance (Schwartz). The way A.I. is framed is partly the result of business incentives that deliberately favour certain vocabulary over other vocabulary. We have already seen this happen with the renaming of 'free software' as 'open source' as a branding strategy in the late 1990s (Coleman 65) and with the 'cloud' metaphor in computing (Holt and Vonderau 75). It is also the result of journalistic coverage that leaves you either very worried or very optimistic (Naudé).

Media representations of A.I. as uncontrollable

Films like 2001: A Space Odyssey (Kubrick 1968), Blade Runner (Scott 1982) and Ex Machina (Garland 2014) promote a man-versus-machine way of thinking about A.I. and show us how A.I. might evolve into something beyond our control. Tech journalism seems only to reinforce this image (Schwartz). Big media events in which A.I. companies challenge world champions to play against their newest machine learning programs, like AlphaZero, followed by camera shots of the disbelief and anger of the losing human opponents, only underline this way of thinking.

AlphaZero

In its description of AlphaZero, DeepMind emphasizes the program's creativity, intelligence and real-world problem-solving capabilities (DeepMind). The company's most recent progress has been in diagnosing diseases and improving the energy efficiency of data-center cooling (DeepMind). In the published paper, AlphaZero is described as a deep neural network combined with a Monte Carlo tree search (MCTS) algorithm: the network estimates how promising each move and position is, and the search uses those estimates to explore the most probable lines of play very efficiently (Silver et al.).
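To make the search part a little more concrete, here is a minimal Python sketch of the kind of selection rule the paper describes: at each step the search picks the move that balances its average value so far (Q) against an exploration bonus weighted by the network's prior probability for that move (P). Several things here are assumptions for illustration only: the toy game (a pile of stones where each player removes one or two, and whoever takes the last stone wins) stands in for chess, the `evaluate` function is a hypothetical stand-in for the trained neural network that simply returns uniform priors and a neutral value, and the fixed constant `c_puct` simplifies the exploration schedule used in the paper. It is a sketch of the technique, not DeepMind's implementation.

```python
from __future__ import annotations

import math
from dataclasses import dataclass, field


def legal_moves(pile: int) -> list[int]:
    # Toy game (assumption): remove 1 or 2 stones; taking the last stone wins.
    return [m for m in (1, 2) if m <= pile]


def evaluate(pile: int) -> tuple[dict[int, float], float]:
    """Hypothetical stand-in for AlphaZero's neural network.

    Returns uniform move priors P(s, a) and a neutral value v = 0.
    A trained network would return learned estimates instead.
    """
    moves = legal_moves(pile)
    return {m: 1.0 / len(moves) for m in moves}, 0.0


@dataclass
class Node:
    prior: float
    visits: int = 0
    value_sum: float = 0.0  # from the view of the player who chose this move
    children: dict[int, Node] = field(default_factory=dict)

    def q(self) -> float:
        # Average value Q(s, a); 0 for moves that have not been tried yet.
        return self.value_sum / self.visits if self.visits else 0.0


def select(node: Node, c_puct: float) -> tuple[int, Node]:
    # PUCT rule: pick the move maximising Q(s, a) + U(s, a), where the
    # exploration bonus U grows with the prior P(s, a) and shrinks with visits.
    sqrt_total = math.sqrt(node.visits)

    def score(item: tuple[int, Node]) -> float:
        _, child = item
        return child.q() + c_puct * child.prior * sqrt_total / (1 + child.visits)

    return max(node.children.items(), key=score)


def simulate(node: Node, pile: int, c_puct: float) -> float:
    """Run one simulation and return the value for the player to move at `node`."""
    if pile == 0:
        value = -1.0  # the previous player took the last stone, so we have lost
    elif not node.children:
        priors, value = evaluate(pile)  # expand the leaf using the "network"
        node.children = {m: Node(prior=p) for m, p in priors.items()}
    else:
        move, child = select(node, c_puct)
        value = -simulate(child, pile - move, c_puct)  # the child's value is the opponent's
    node.visits += 1
    node.value_sum -= value  # store it from the view of the player who chose this move
    return value


def search(pile: int, num_simulations: int = 200, c_puct: float = 1.5) -> dict[int, int]:
    root = Node(prior=1.0)
    for _ in range(num_simulations):
        simulate(root, pile, c_puct)
    # Visit counts per move; AlphaZero bases its move choice on counts like these.
    return {move: child.visits for move, child in root.children.items()}


print(search(4))
```

With four stones on the pile, most visits should go to removing one stone, which leaves the opponent three stones, a losing position. In AlphaZero, such visit counts are used both to choose a move and as a training target for the network during self-play.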

Alphabet’s DeepMind, the company behind AlphaZero

The way the company frames AlphaZero, and A.I. in general, is what scholars Johnson and Verdicchio describe as a discourse that reinforces the autonomy metaphor, leading to miscommunication and fear among non-experts like the writer of this blog (Johnson and Verdicchio 576). AlphaZero learns by playing against itself, uses its deep neural network efficiently and does not need any humans to provide it with data. This image coincides with the way A.I. is often represented online: as a brain that exceeds the capacity of the human brain (search for artificial intelligence in Google Images and you will see what I mean).

A.I. Infrastructure

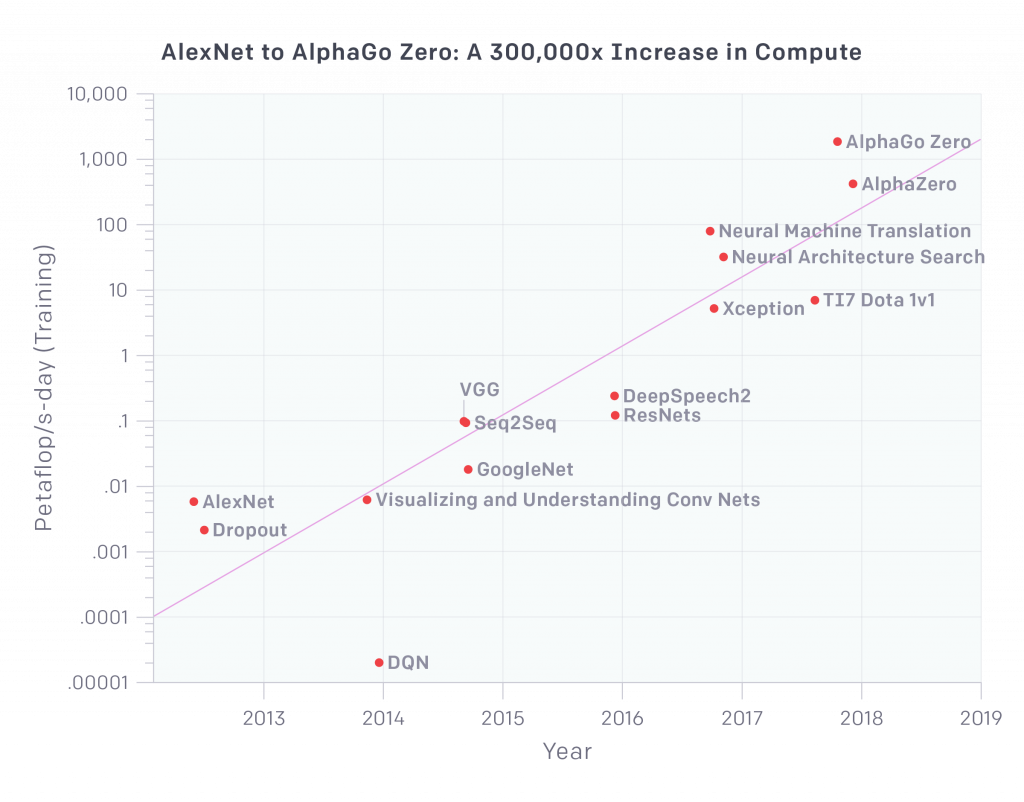

However, A.I. is not some abstract, ethereal entity. Recent research into training deep learning models for natural-language processing (NLP), the technology also used in your phone, car or Google Home, shows that training A.I. consumes enormous amounts of energy because of the computational resources that deep learning requires (Strubell et al.). Training a single large model, including an automated search for its architecture, can emit more than 626,000 pounds of carbon dioxide equivalent, roughly five times the lifetime emissions of an average car, fuel included (Strubell et al., Hao).
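The "five cars" comparison is simple arithmetic on two figures from the comparison table in Strubell et al.: about 626,155 lbs of CO2-equivalent for training a large Transformer model with neural architecture search, and 126,000 lbs for an average car over its lifetime, fuel included. The short Python sketch below just divides the two and converts pounds to metric tonnes; the constant names are our own.

```python
# Figures reported by Strubell et al. (2019), in pounds of CO2-equivalent.
TRAINING_WITH_NAS_LBS = 626_155  # large Transformer trained with neural architecture search
CAR_LIFETIME_LBS = 126_000       # average car over its lifetime, fuel included

KG_PER_LB = 0.45359237           # exact definition of the international pound

ratio = TRAINING_WITH_NAS_LBS / CAR_LIFETIME_LBS
tonnes = TRAINING_WITH_NAS_LBS * KG_PER_LB / 1000

print(f"about {ratio:.2f} car lifetimes")   # about 4.97 car lifetimes
print(f"about {tonnes:.0f} metric tonnes")  # about 284 metric tonnes
```

In other words, "five cars" is a slight rounding up of just under five car lifetimes, or roughly 284 tonnes of CO2-equivalent.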

Google's TPUs (tensor processing units)

In a 2017 NIPS keynote, David Silver, one of DeepMind's leading researchers, explained that the learning curves of AlphaZero's training run could only be produced once, because running the program was very expensive and used a large number of TPUs (tensor processing units, processors developed by Google) on Google's cloud computing services. Scholars have already pointed out that the notion of the cloud is a marketing concept that abstracts away the physical infrastructure beneath it (Holt and Vonderau 72), and they are beginning to argue for more materialist approaches to new media (Goddard 1762). If we look at the infrastructures beneath A.I., we find huge computational forces that require large amounts of energy and human input. For A.I. to work, it needs computing capacity, storage capacity, networking infrastructure, security and cost-effective solutions (Hofstee), and a recent analysis by the consultancy McKinsey & Company states that companies will need a strong digital base in order to keep up with A.I. competition (Bughin and van Zeebroeck).

What’s next

A.I. has been around for a long time and is already used in many applications around us. AlphaZero is not a ghostly, human-like entity; it is cables, servers and algorithms powered by electricity and humans. Within a day it was trained to beat the best players of these zero-sum games in the world, but it did so with extreme computing power. Had AlphaZero used only one processor, it would have taken a few years to reach that level (Mols). It benefits from Google's enormous infrastructure, financial capacity and knowledge. Shifting our perspective to the platforms and infrastructures beneath A.I. may be a more constructive way to think about it. Google has just completed its controversial takeover of DeepMind Health, which focuses on the use of A.I. in predictive diagnosis (Lomas). Looking at Google's powerful infrastructure and database of knowledge, maybe A.I. isn't what we should be worrying about.

References

“Cloud TPU.” Google Cloud. https://cloud.google.com/tpu/?hl=nl. Accessed 19 Sept. 2019.

Amodei, Dario, and Danny Hernandez. “AI and Compute.” OpenAI. 16 May 2018. https://openai.com/blog/ai-and-compute/. Accessed 23 Sept. 2019.

Bughin, Jacques, and Nicolas van Zeebroeck. “AI Adoption: Why a Digital Base Is Critical.” McKinsey Quarterly. McKinsey & Company. 2018. https://www.mckinsey.com/business-functions/mckinsey-analytics/our-insights/artificial-intelligence-why-a-digital-base-is-critical. Accessed 21 Sept. 2019.

Coleman, E. Gabriella. Coding Freedom: The Ethics and Aesthetics of Hacking. Princeton: Princeton University Press. 2012.

“DeepMind AlphaZero – Mastering Games Without Human Knowledge.” YouTube. https://www.youtube.com/watch?v=Wujy7OzvdJk. Accessed 21 Sept. 2019.

Hao, Karen. “Training a Single AI Model Can Emit as Much Carbon as Five Cars in Their Lifetimes.” MIT Technology Review. 2019. https://www.technologyreview.com/s/613630/training-a-single-ai-model-can-emit-as-much-carbon-as-five-cars-in-their-lifetimes/. Accessed 19 Sept. 2019.

Hofstee, Eltjo. “What Are the Infrastructure Requirements for AI?” Leaseweb Blog. 2019. https://blog.leaseweb.com/2019/07/04/infrastructure-requirements-ai/. Accessed 22 Sept. 2019.

Holt, Jennifer, and Patrick Vonderau. “Where the Internet Lives.” Signal Traffic. Eds. Lisa Parks and Nicole Starosielski. Urbana, Chicago and Springfield: University of Illinois Press. 2015. 71-93.

Johnson, Deborah G., and Mario Verdicchio. “Reframing AI Discourse.” Minds and Machines 27.4 (2017): 575-590.

Levy, Steven. “What Deep Blue Tells Us About AI in 2017.” Wired. 2017. https://www.wired.com/2017/05/what-deep-blue-tells-us-about-ai-in-2017/. Accessed 22 Sept. 2019.

Lomas, Natasha. “Google Completes Controversial Takeover of DeepMind Health.” TechCrunch. 2019. https://techcrunch.com/2019/09/19/google-completes-controversial-takeover-of-deepmind-health/. Accessed 23 Sept. 2019.

Mols, Bennie. “Binnen één dag speelt AlphaZero iedereen onder tafel” [“Within One Day, AlphaZero Outplays Everyone”]. NRC. 2018. https://www.nrc.nl/nieuws/2018/01/19/binnen-een-dag-speelt-alphazero-iedereen-onder-tafel-a1589037. Accessed 19 Sept. 2019.

Schwartz, Oscar. “‘The Discourse Is Unhinged’: How the Media Gets AI Alarmingly Wrong.” The Guardian. 2018. https://www.theguardian.com/technology/2018/jul/25/ai-artificial-intelligence-social-media-bots-wrong. Accessed 23 Sept. 2019.

Silver, David, et al. “A General Reinforcement Learning Algorithm That Masters Chess, Shogi, and Go through Self-Play.” Science 362.6419 (2018): 1140-1144.

Strubell, Emma, et al. “Energy and Policy Considerations for Deep Learning in NLP.” arxiv.org. 2019. https://arxiv.org/abs/1906.02243v1.